Twins

Twins: Revisiting the Design of Spatial Attention in Vision Transformers

Introduction

Abstract

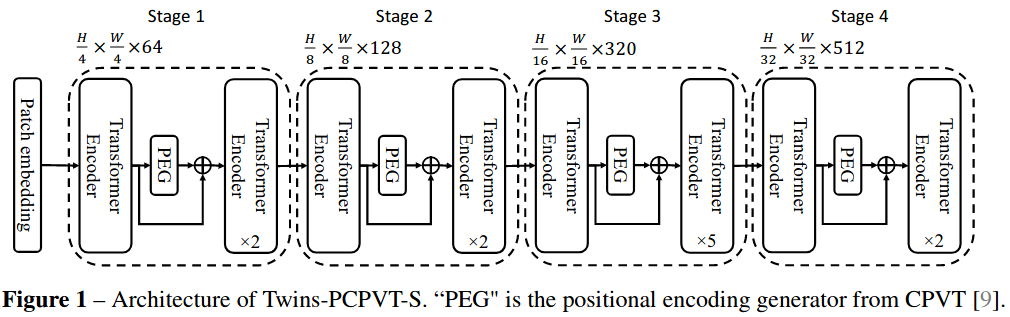

Very recently, a variety of vision transformer architectures for dense prediction tasks have been proposed and they show that the design of spatial attention is critical to their success in these tasks. In this work, we revisit the design of the spatial attention and demonstrate that a carefully-devised yet simple spatial attention mechanism performs favourably against the state-of-the-art schemes. As a result, we propose two vision transformer architectures, namely, Twins-PCPVT and Twins-SVT. Our proposed architectures are highly-efficient and easy to implement, only involving matrix multiplications that are highly optimized in modern deep learning frameworks. More importantly, the proposed architectures achieve excellent performance on a wide range of visual tasks, including image level classification as well as dense detection and segmentation. The simplicity and strong performance suggest that our proposed architectures may serve as stronger backbones for many vision tasks. Our code is released at this https URL.
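The abstract stays at a high level. To illustrate the two kinds of spatial attention that Twins-SVT interleaves, locally-grouped self-attention (LSA) inside non-overlapping windows and global sub-sampled attention (GSA) against one summary per window, here is a minimal NumPy sketch. It is a simplification under stated assumptions, not the authors' code: a single head, no learned query/key/value projections, no positional encodings, no residuals or MLPs, and average pooling as a stand-in for the learned sub-sampling. All function names are hypothetical.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    # Numerically stable softmax along `axis`.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    # Scaled dot-product attention: two matrix multiplications around a softmax.
    scores = q @ np.swapaxes(k, -1, -2) / np.sqrt(q.shape[-1])
    return softmax(scores) @ v


def locally_grouped_attention(x: np.ndarray, window: int) -> np.ndarray:
    """Hypothetical LSA sketch: attention only within window x window groups.

    x has shape (H, W, C); H and W must be divisible by `window`.
    """
    h, w, c = x.shape
    # (H, W, C) -> (H/win, win, W/win, win, C) -> (num_groups, win*win, C)
    g = x.reshape(h // window, window, w // window, window, c)
    g = g.transpose(0, 2, 1, 3, 4).reshape(-1, window * window, c)
    out = attention(g, g, g)  # batched over groups; groups never interact
    # Undo the grouping to restore the (H, W, C) layout.
    out = out.reshape(h // window, w // window, window, window, c)
    return out.transpose(0, 2, 1, 3, 4).reshape(h, w, c)


def global_subsampled_attention(x: np.ndarray, window: int) -> np.ndarray:
    """Hypothetical GSA sketch: every token attends to one summary per window.

    Average pooling stands in for the learned sub-sampling of the real model.
    """
    h, w, c = x.shape
    pooled = x.reshape(h // window, window, w // window, window, c).mean(axis=(1, 3))
    kv = pooled.reshape(-1, c)  # (num_groups, C): one key/value per window
    q = x.reshape(-1, c)        # (H*W, C): every token is a query
    return attention(q, kv, kv).reshape(h, w, c)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.standard_normal((8, 8, 16))
    # One LSA step followed by one GSA step; shapes are preserved.
    y = global_subsampled_attention(locally_grouped_attention(x, 4), 4)
    print(y.shape)  # (8, 8, 16)
```

The design point the sketch tries to show: for H*W tokens and window size k, LSA builds score matrices of size k²×k² per window instead of one (H*W)×(H*W) matrix, while GSA restores cross-window communication at the cost of only H*W×(H*W/k²) scores.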

Citation

@article{chu2021twins,
  title={Twins: Revisiting spatial attention design in vision transformers},
  author={Chu, Xiangxiang and Tian, Zhi and Wang, Yuqing and Zhang, Bo and Ren, Haibing and Wei, Xiaolin and Xia, Huaxia and Shen, Chunhua},
  journal={arXiv preprint arXiv:2104.13840},
  year={2021}
}

Usage

We provide pretrained models converted from the official repo.

If you want to convert the keys yourself in order to use the pretrained models from the official repository, we also provide the script twins2mmseg.py in tools/model_converters, which converts the keys of the official models to the MMSegmentation style.

python tools/model_converters/twins2mmseg.py ${PRETRAIN_PATH} ${STORE_PATH} ${MODEL_TYPE}

This script converts a PCPVT or SVT pretrained model from PRETRAIN_PATH and stores the converted model in STORE_PATH. MODEL_TYPE selects the architecture and is either pcpvt or svt.

For example,

python tools/model_converters/twins2mmseg.py ./alt_gvt_base.pth ./pretrained/alt_gvt_base.pth svt
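A converter of this kind mainly remaps checkpoint key names (and, where layouts differ, may also reshape or merge tensors). The sketch below shows only the renaming idea on a plain dictionary. The prefix rules in HYPOTHETICAL_RULES and the helper convert_keys are invented for illustration and do not reproduce the actual mapping in twins2mmseg.py, which is what should be used for real checkpoints.

```python
from collections import OrderedDict

# HYPOTHETICAL prefix rules, for illustration only. The real mapping lives in
# tools/model_converters/twins2mmseg.py and is not reproduced here.
HYPOTHETICAL_RULES = {
    "pcpvt": [("blocks.", "layers."), ("pos_block.", "position_encodings.")],
    "svt": [("blocks.", "layers."), ("mlp.fc1.", "ffn.layers.0.0."), ("mlp.fc2.", "ffn.layers.1.")],
}


def convert_keys(state_dict, model_type):
    """Return a new OrderedDict whose keys are renamed by the rules for model_type."""
    if model_type not in HYPOTHETICAL_RULES:
        raise ValueError(f"model_type must be 'pcpvt' or 'svt', got {model_type!r}")
    converted = OrderedDict()
    for key, value in state_dict.items():
        new_key = key
        # Apply each (old, new) substring rule in order; values are left untouched.
        for old, new in HYPOTHETICAL_RULES[model_type]:
            new_key = new_key.replace(old, new)
        converted[new_key] = value
    return converted


if __name__ == "__main__":
    toy = {"blocks.0.0.mlp.fc1.weight": 1, "head.weight": 2}
    print(list(convert_keys(toy, "svt")))
    # ['layers.0.0.ffn.layers.0.0.weight', 'head.weight']
```

With PyTorch, the input dictionary would typically come from torch.load(PRETRAIN_PATH, map_location="cpu") and the result would be written with torch.save(converted, STORE_PATH).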

Results and models

ADE20K

| Method | Backbone | Crop Size | Lr schd | Mem (GB) | Inf time (fps) | mIoU | mIoU(ms+flip) | config | download |
|---|---|---|---|---|---|---|---|---|---|
| Twins-FPN | PCPVT-S | 512x512 | 80000 | 6.60 | 27.15 | 43.26 | 44.11 | config | model \| log |
| Twins-UPerNet | PCPVT-S | 512x512 | 160000 | 9.67 | 14.24 | 46.04 | 46.92 | config | model \| log |
| Twins-FPN | PCPVT-B | 512x512 | 80000 | 8.41 | 19.67 | 45.66 | 46.48 | config | model \| log |
| Twins-UPerNet (8x2) | PCPVT-B | 512x512 | 160000 | 6.46 | 12.04 | 47.91 | 48.64 | config | model \| log |
| Twins-FPN | PCPVT-L | 512x512 | 80000 | 10.78 | 14.32 | 45.94 | 46.70 | config | model \| log |
| Twins-UPerNet (8x2) | PCPVT-L | 512x512 | 160000 | 7.82 | 10.70 | 49.35 | 50.08 | config | model \| log |
| Twins-FPN | SVT-S | 512x512 | 80000 | 5.80 | 29.79 | 44.47 | 45.42 | config | model \| log |
| Twins-UPerNet (8x2) | SVT-S | 512x512 | 160000 | 4.93 | 15.09 | 46.08 | 46.96 | config | model \| log |
| Twins-FPN | SVT-B | 512x512 | 80000 | 8.75 | 21.10 | 46.77 | 47.47 | config | model \| log |
| Twins-UPerNet (8x2) | SVT-B | 512x512 | 160000 | 6.77 | 12.66 | 48.04 | 48.87 | config | model \| log |
| Twins-FPN | SVT-L | 512x512 | 80000 | 11.20 | 17.80 | 46.55 | 47.74 | config | model \| log |
| Twins-UPerNet (8x2) | SVT-L | 512x512 | 160000 | 8.41 | 10.73 | 49.65 | 50.63 | config | model \| log |
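
The benefit of multi-scale plus flip testing can be read directly off the table. As a quick check, this short script transcribes the two mIoU columns and computes mIoU(ms+flip) minus mIoU for each row; ms_flip_gains is a hypothetical helper, not part of MMSegmentation.

```python
# (method, backbone, mIoU, mIoU(ms+flip)) transcribed from the ADE20K table.
ROWS = [
    ("Twins-FPN", "PCPVT-S", 43.26, 44.11),
    ("Twins-UPerNet", "PCPVT-S", 46.04, 46.92),
    ("Twins-FPN", "PCPVT-B", 45.66, 46.48),
    ("Twins-UPerNet (8x2)", "PCPVT-B", 47.91, 48.64),
    ("Twins-FPN", "PCPVT-L", 45.94, 46.70),
    ("Twins-UPerNet (8x2)", "PCPVT-L", 49.35, 50.08),
    ("Twins-FPN", "SVT-S", 44.47, 45.42),
    ("Twins-UPerNet (8x2)", "SVT-S", 46.08, 46.96),
    ("Twins-FPN", "SVT-B", 46.77, 47.47),
    ("Twins-UPerNet (8x2)", "SVT-B", 48.04, 48.87),
    ("Twins-FPN", "SVT-L", 46.55, 47.74),
    ("Twins-UPerNet (8x2)", "SVT-L", 49.65, 50.63),
]


def ms_flip_gains(rows):
    """Return (method, backbone, gain) with gain = mIoU(ms+flip) - mIoU, in points."""
    return [(method, backbone, round(flip - single, 2)) for method, backbone, single, flip in rows]


gains = ms_flip_gains(ROWS)
best = max(gains, key=lambda r: r[2])
worst = min(gains, key=lambda r: r[2])
mean = round(sum(g for _, _, g in gains) / len(gains), 2)
print(f"mean gain: {mean}")   # mean gain: 0.86
print(f"largest: {best}")     # largest: ('Twins-FPN', 'SVT-L', 1.19)
print(f"smallest: {worst}")   # smallest: ('Twins-FPN', 'SVT-B', 0.7)
```

Across all twelve models, test-time augmentation adds between 0.70 and 1.19 mIoU points.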

Note:

8x2 means 8 GPUs with 2 samples per GPU in training. The default setting of Twins on ADE20K is 8 GPUs with 4 samples per GPU in training.

UPerNet and FPN denote the decode heads used in the corresponding Twins models, namely UPerHead and FPNHead, respectively. All models in the official repo use UPerHead.
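To relate the two batch settings, a small worked calculation: 8x2 gives an effective batch of 16 images per iteration, while the default 8x4 gives 32. The ADE20K training split size of 20,210 images is not stated in this README and is an assumption of the sketch, as are the helper names. In MMSegmentation 0.x style configs, the per-GPU count usually corresponds to the samples_per_gpu entry of the data dict.

```python
ADE20K_TRAIN_IMAGES = 20_210  # assumed size of the ADE20K training split


def effective_batch(gpus: int, samples_per_gpu: int) -> int:
    # Images processed per iteration across all GPUs.
    return gpus * samples_per_gpu


def epochs(iters: int, batch: int, dataset_size: int = ADE20K_TRAIN_IMAGES) -> float:
    # Number of passes over the training set implied by a schedule.
    return iters * batch / dataset_size


b_8x2 = effective_batch(8, 2)  # 16
b_8x4 = effective_batch(8, 4)  # 32
print(round(epochs(160_000, b_8x2), 2))  # 126.67
print(round(epochs(160_000, b_8x4), 2))  # 253.34
print(round(epochs(80_000, b_8x4), 2))   # 126.67
```

Under these assumptions, a 160k-iteration schedule at 8x2 sees the same number of training samples as an 80k-iteration schedule at the default 8x4, and half as many as 160k iterations at 8x4.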