- Set up a Docker environment for the prediction/image/ FastAPI server
- Dockerfile: GPU image based on PyTorch 2.1 + CUDA 12.1
- docker-compose.yml: GPU allocation + data volume mounts
- requirements.txt: server dependency list
- .env.example: environment variable template
- DOCKER_USAGE.md: build/run/API usage documentation
- Dockerfile: add mkdir -p for the folders excluded by .dockerignore
- .gitignore: exclude prediction/image outputs and model weights (.pth)
- Delete dbInsert_csv.py and dbInsert_shp.py (unused DB logic)
- api.py: remove the dbInsert import and the commented-out DB call code
- aerialRouter.ts: fix the req.params type error

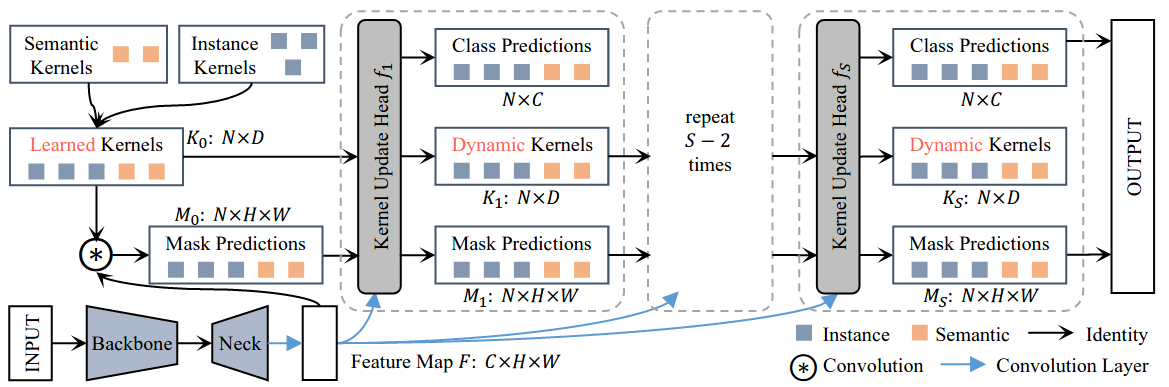

K-Net

K-Net: Towards Unified Image Segmentation

Introduction

Abstract

Semantic, instance, and panoptic segmentation have been addressed using different, specialized frameworks despite their underlying connections. This paper presents a unified, simple, and effective framework for these essentially similar tasks. The framework, named K-Net, segments both instances and semantic categories consistently with a group of learnable kernels, where each kernel is responsible for generating a mask for either a potential instance or a stuff class. To remedy the difficulty of distinguishing various instances, we propose a kernel update strategy that makes each kernel dynamic and conditional on its meaningful group in the input image. K-Net can be trained end-to-end with bipartite matching, and its training and inference are naturally NMS-free and box-free. Without bells and whistles, K-Net surpasses all previously published state-of-the-art single-model results for panoptic segmentation on the MS COCO test-dev split and semantic segmentation on the ADE20K val split, with 55.2% PQ and 54.3% mIoU, respectively. Its instance segmentation performance is also on par with Cascade Mask R-CNN on MS COCO, with 60%-90% faster inference. Code and models will be released.
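The core idea in the abstract, one kernel per mask, can be illustrated with a minimal NumPy sketch. This is not the K-Net implementation: the function name `predict_masks`, the shapes, and the random inputs are assumptions for illustration, and both the kernel update strategy and the bipartite matching used in training are omitted. Each kernel is treated as a 1x1 filter that is dotted with the feature vector at every pixel to give one mask.

```python
import numpy as np

def predict_masks(kernels, features):
    """Turn K kernels into K soft masks.

    kernels:  (K, C) array, one C-dimensional kernel per mask (hypothetical shapes).
    features: (C, H, W) feature map from a backbone.
    Returns:  (K, H, W) mask probabilities in [0, 1].
    """
    # Dot each kernel with the feature vector at every pixel (a 1x1 convolution).
    logits = np.einsum("kc,chw->khw", kernels, features)
    # Sigmoid maps logits to per-pixel mask probabilities.
    return 1.0 / (1.0 + np.exp(-logits))

rng = np.random.default_rng(0)
K, C, H, W = 4, 8, 5, 6                 # illustrative sizes only
kernels = rng.normal(size=(K, C))       # stand-in for learned kernels
features = rng.normal(size=(C, H, W))   # stand-in for backbone features

masks = predict_masks(kernels, features)
# For semantic segmentation, each pixel takes the kernel with the highest response.
segmentation = masks.argmax(axis=0)
print(masks.shape, segmentation.shape)  # (4, 5, 6) (5, 6)
```

Because every mask comes directly from a kernel, no bounding boxes or non-maximum suppression appear anywhere in this sketch, which is the property the abstract describes as box-free and NMS-free.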

@inproceedings{zhang2021knet,
    title={{K-Net: Towards} Unified Image Segmentation},
    author={Wenwei Zhang and Jiangmiao Pang and Kai Chen and Chen Change Loy},
    year={2021},
    booktitle={NeurIPS},
}

Results and models

ADE20K

| Method | Backbone | Crop Size | Lr schd | Mem (GB) | Inf time (fps) | mIoU | mIoU(ms+flip) | config | download |
|---|---|---|---|---|---|---|---|---|---|
| KNet + FCN | R-50-D8 | 512x512 | 80000 | 7.01 | 19.24 | 43.60 | 45.12 | config | model \| log |
| KNet + PSPNet | R-50-D8 | 512x512 | 80000 | 6.98 | 20.04 | 44.18 | 45.58 | config | model \| log |
| KNet + DeepLabV3 | R-50-D8 | 512x512 | 80000 | 7.42 | 12.10 | 45.06 | 46.11 | config | model \| log |
| KNet + UPerNet | R-50-D8 | 512x512 | 80000 | 7.34 | 17.11 | 43.45 | 44.07 | config | model \| log |
| KNet + UPerNet | Swin-T | 512x512 | 80000 | 7.57 | 15.56 | 45.84 | 46.27 | config | model \| log |
| KNet + UPerNet | Swin-L | 512x512 | 80000 | 13.5 | 8.29 | 52.05 | 53.24 | config | model \| log |
| KNet + UPerNet | Swin-L | 640x640 | 80000 | 13.54 | 8.29 | 52.21 | 53.34 | config | model \| log |

Note:

- All K-Net experiments were run on 8 V100 (32G) GPUs with 2 samples per GPU.
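The mIoU columns in the table above report mean intersection-over-union across classes. As a rough illustration of the metric, here is a minimal NumPy sketch. The helper `mean_iou` and the toy label maps are hypothetical, and the sketch scores a single image, whereas benchmark evaluation typically accumulates intersections and unions over the whole validation set before averaging.

```python
import numpy as np

def mean_iou(pred, target, num_classes):
    """Mean IoU over the classes that appear in pred or target."""
    ious = []
    for c in range(num_classes):
        pred_c = pred == c
        target_c = target == c
        union = np.logical_or(pred_c, target_c).sum()
        if union == 0:
            continue  # class absent from both maps: leave it out of the mean
        inter = np.logical_and(pred_c, target_c).sum()
        ious.append(inter / union)
    return float(np.mean(ious))

# Toy 2x3 label maps with three classes (made-up values, not ADE20K data).
target = np.array([[0, 0, 1],
                   [1, 2, 2]])
pred = np.array([[0, 1, 1],
                 [1, 2, 0]])

# Per-class IoU is 1/3, 2/3, and 1/2, so the mean is 0.5.
print(round(mean_iou(pred, target, num_classes=3), 3))  # 0.5
```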